If you’re looking at consolidating your SQL environment or just trying to gain some extra disk space, the sp_estimate_data_compression_savings stored procedure can be useful in pointing you in the right direction. Passing in an object name and compression type will return estimated savings if the compression type is applied. To do this, the procedure generates an estimate after taking a sample of the object data and applying the specified compression.

When I am running sp_estimate_data_compression_savings, I stick to passing object name and a compression type. Compression types supported are ROW, PAGE, COLUMNSTORE, and COLUMNSTORE_ARCHIVE. You can also pass NONE if you are interested in what a compressed object size would be without compression.

For an example, let’s look at compression on the Comments table on a test environment StackOverflow2013 database.

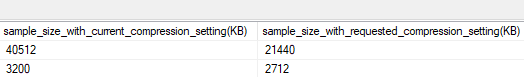

Checking for ROW compression:

EXEC sp_estimate_data_compression_savings NULL, 'Comments', NULL, NULL, 'ROW';

GO

Checking for PAGE compression:

EXEC sp_estimate_data_compression_savings NULL, 'Comments', NULL, NULL, 'PAGE';

GO

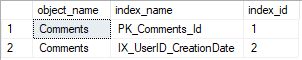

Wondering why we have two results in each screenshot after running against one table? In this case, we will get two results since there are two indexes on the table (one clustered index and one non-clustered index). We can specify individual indexes when running sp_estimate_data_compression_savings but I prefer to see them all. To find which index id belongs to which index name, we can run:

SELECT o.name as 'object_name', i.name as 'index_name', i.index_id FROM sys.indexes i

JOIN sys.objects o on i.object_id = o.object_id

WHERE o.name = 'Comments';

GO

If we decide that for now we only want to compress the IX_UserID_CreationDate index with PAGE compression, we can run:

ALTER INDEX IX_UserID_CreationDate ON Comments REBUILD PARTITION = ALL WITH (DATA_COMPRESSION = PAGE);

GO

At this point, it’s time to get the disk space back. The first option, DBCC SHRINKFILE, is the easier but more painful option to reclaim space. Be aware of the fragmentation issues this will cause. If possible, it’s better to go with the second option which is to create a new file group and move the tables/indexes to the new file group. Once moved, you can either shrink or drop the old file group.

While I haven’t personally seen these estimates be too far off, they are only estimates. Taking a backup of the database in production, restoring to a test environment, and actually applying the compression will be a better indicator than what sp_estimate_data_compression_savings provides. If you’re working with critical production data, start with sp_estimate_data_compression_savings and do some testing to confirm before applying any production changes.

Thanks for reading!